Wasserstein-2 Generative Networks

¹Skolkovo Institute of Science and Technology; ²Information Technologies, Mechanics and Optics University

arXiv 2019

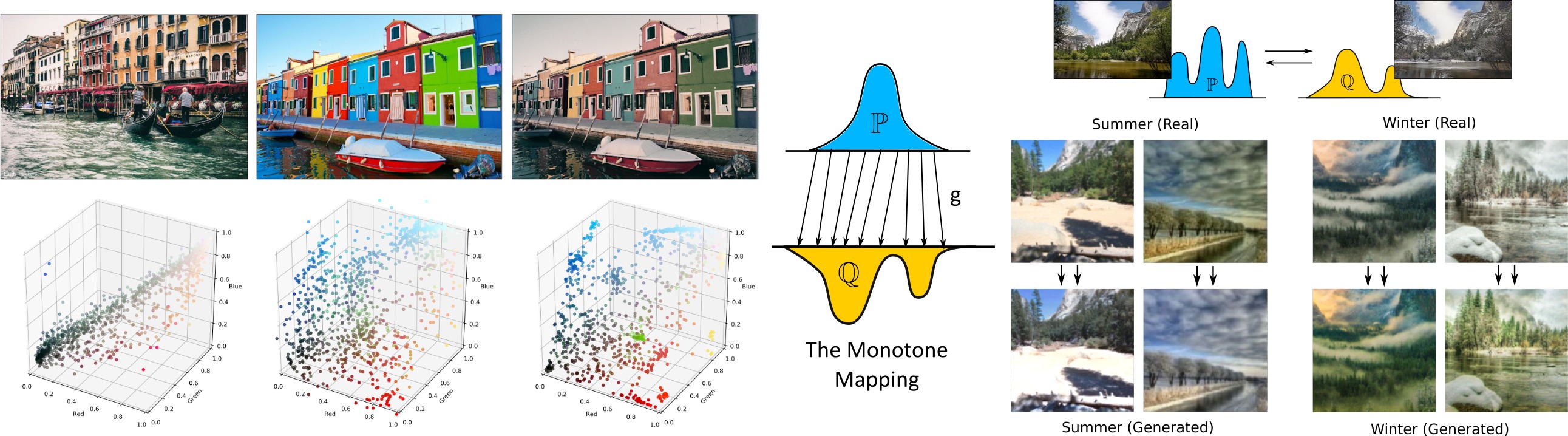

Examples of the algorithm applied to image-to-image color transfer (left) and unpaired image-to-image style transfer (right).

Abstract

Training Generative Adversarial Networks is difficult because of the minimax nature of the optimization objective. In this paper, we propose a novel end-to-end algorithm for training generative models that optimizes a non-minimax objective, which simplifies model training. The proposed algorithm approximates the Wasserstein-2 distance using Input Convex Neural Networks. On the theoretical side, we estimate the properties of the generative mapping fitted by the algorithm. On the practical side, we conduct computational experiments that confirm the efficiency of our algorithm in various applied problems: image-to-image color transfer, latent space optimal transport, image-to-image style transfer, and domain adaptation.

Materials

Contact

If you have any questions about this work, please contact us at a.korotin@skoltech.ru.