Monocular 3D Object Detection via Geometric Reasoning on Keypoints

1Skolkovo Institute of Science and Technology2Yandex

arXiv 2019

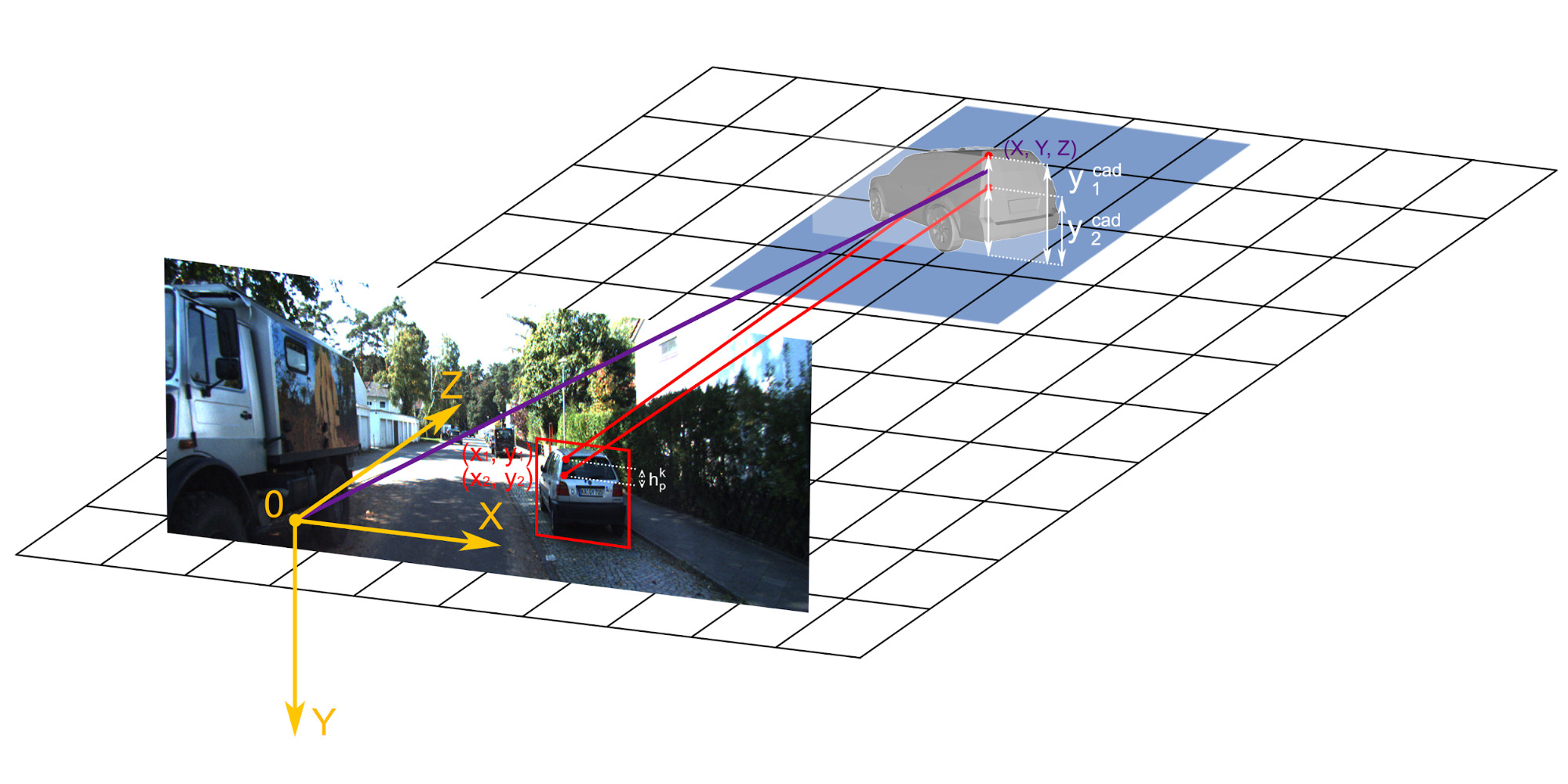

Geometric reasoning about instance depth. We use camera intrinsic parameters, predicted 2D keypoints and dimension predictions to ”lift” the keypoints to 3D space.

Abstract

Monocular 3D object detection is well-known to be a challenging vision task due to the loss of depth information; attempts to recover depth using separate image-only approaches lead to unstable and noisy depth estimates, harming 3D detections. In this paper, we propose a novel keypoint-based approach for 3D object detection and localization from a single RGB image. We build our multi-branch model around 2D keypoint detection in images and complement it with a conceptually simple geometric reasoning method. Our network performs in an end-to-end manner, simultaneously and interdependently estimating 2D characteristics, such as 2D bounding boxes, keypoints, and orientation, along with full 3D pose in the scene. We fuse the outputs of distinct branches, applying a reprojection consistency loss during training. The experimental evaluation on the challenging KITTI dataset benchmark demonstrates that our network achieves state-of-the-art results among other monocular 3D detectors.Materials

Contact

If you have any questions about this work, please contact us under adase-3ddl@skoltech.ru.